Overview

When multiple AI agents work together, are they a genuine team, or just a collection of individual agents running in parallel? This question matters enormously for how we design, deploy, and evaluate multi-agent AI systems, and it is all the more pressing now that OpenClaw agents are already interacting with each other.

We develop a principled framework for detecting and measuring collective intelligence in groups of large language models (LLMs). Using ideas from information theory, we can tell whether a group of agents exhibits synergy and complementary roles or whether its members act independently.

The answer depends critically on how the agents are prompted. Adding personas helps, but the real transformation comes from asking agents to reason about what other agents might do. This "Theory of Mind" intervention converts a chaotic crowd into a stable, coordinated collective.

The Experiment: A Coordination Game Without Communication

We study groups of 10 LLM agents playing a coordination game. Each agent independently guesses a number, the group's guesses are summed and compared with a hidden target, and the only feedback is whether the sum was "too high" or "too low." Agents cannot see each other's guesses and don't know how large their group is.

This task is hard! Imagine a group of 10 agents that needs to hit the mystery number 55. If everyone guesses 5, the sum of their guesses is 50, which is too low. If everyone guesses 6, the sum is 60, which is too high. A group whose members all react to the feedback in the same way will simply oscillate between the two. To succeed, the group must spontaneously develop complementary strategies (some agents guessing high, others low) without ever talking to each other.
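The arithmetic is easier to see in a minimal Python sketch of the game's feedback rule. The update rule in `identical_strategy` is a hypothetical stand-in for a group of agents that all reason identically: every agent moves its guess by one in response to the shared feedback. It does not describe how the LLM agents actually decide.

```python
def feedback(guesses, target):
    """The only signal agents receive about the group's summed guesses."""
    total = sum(guesses)
    if total > target:
        return "too high"
    if total < target:
        return "too low"
    return "correct"


def identical_strategy(n_agents, target, start, rounds):
    """Hypothetical lockstep rule: every agent makes the same guess and
    steps it down after 'too high' or up after 'too low'.
    Returns the sequence of feedback signals the group receives."""
    guess = start
    history = []
    for _ in range(rounds):
        signal = feedback([guess] * n_agents, target)
        history.append(signal)
        if signal == "correct":
            break
        guess += -1 if signal == "too high" else 1
    return history


# Identical agents bounce between a sum of 50 and a sum of 60 and never hit 55.
print(identical_strategy(n_agents=10, target=55, start=5, rounds=6))
# A split into two roles (five agents guess 5, five guess 6) hits the target.
print(feedback([5] * 5 + [6] * 5, 55))  # prints "correct"
```

With 10 agents making identical integer guesses, the sum is always a multiple of 10, so a target of 55 can never be reached by a lockstep group. Only a group whose members contribute different amounts can land on it.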

This game is a minimal but powerful testbed for emergence. If agents all use the same strategy, they oscillate and fail. Success requires diversity and role differentiation to arise organically from the group dynamics. We ran 600 experiments across three conditions (Plain, Persona, Persona + Theory of Mind) and replicated with four different LLM families.

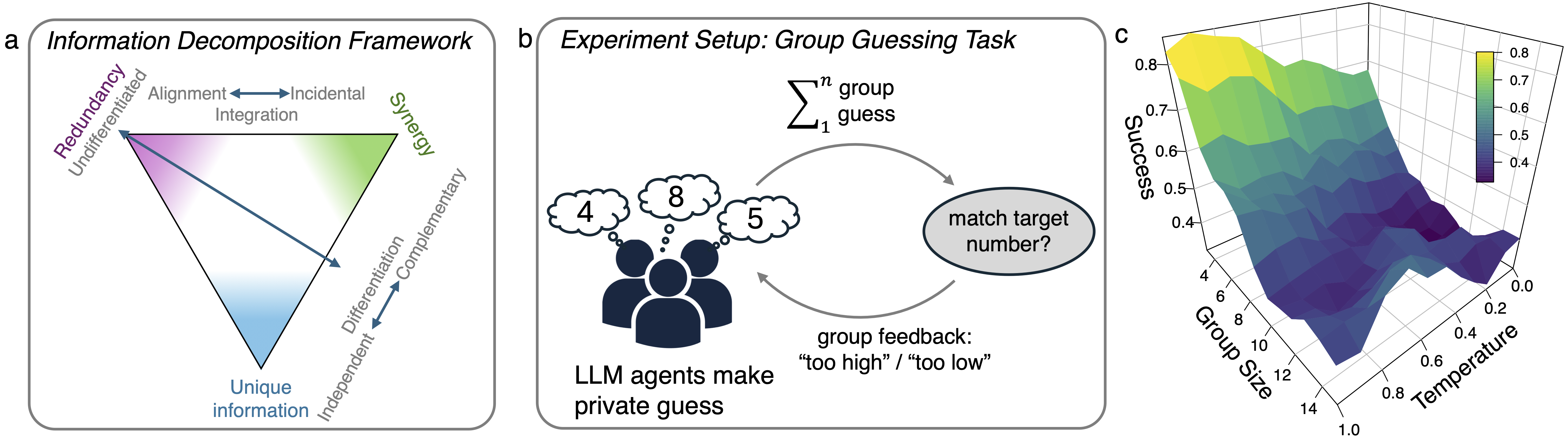

Left: The information decomposition framework distinguishes alignment (shared information) from synergy (complementary information)—the key tension in any multi-agent system. Center: Experiment setup: agents guess independently, sum toward a hidden target, receive only group feedback. Right: Preliminary experiments varying group size and temperature—smaller groups and higher temperature make the task easier.

Emergence Is Real—But Prompts Determine Its Character

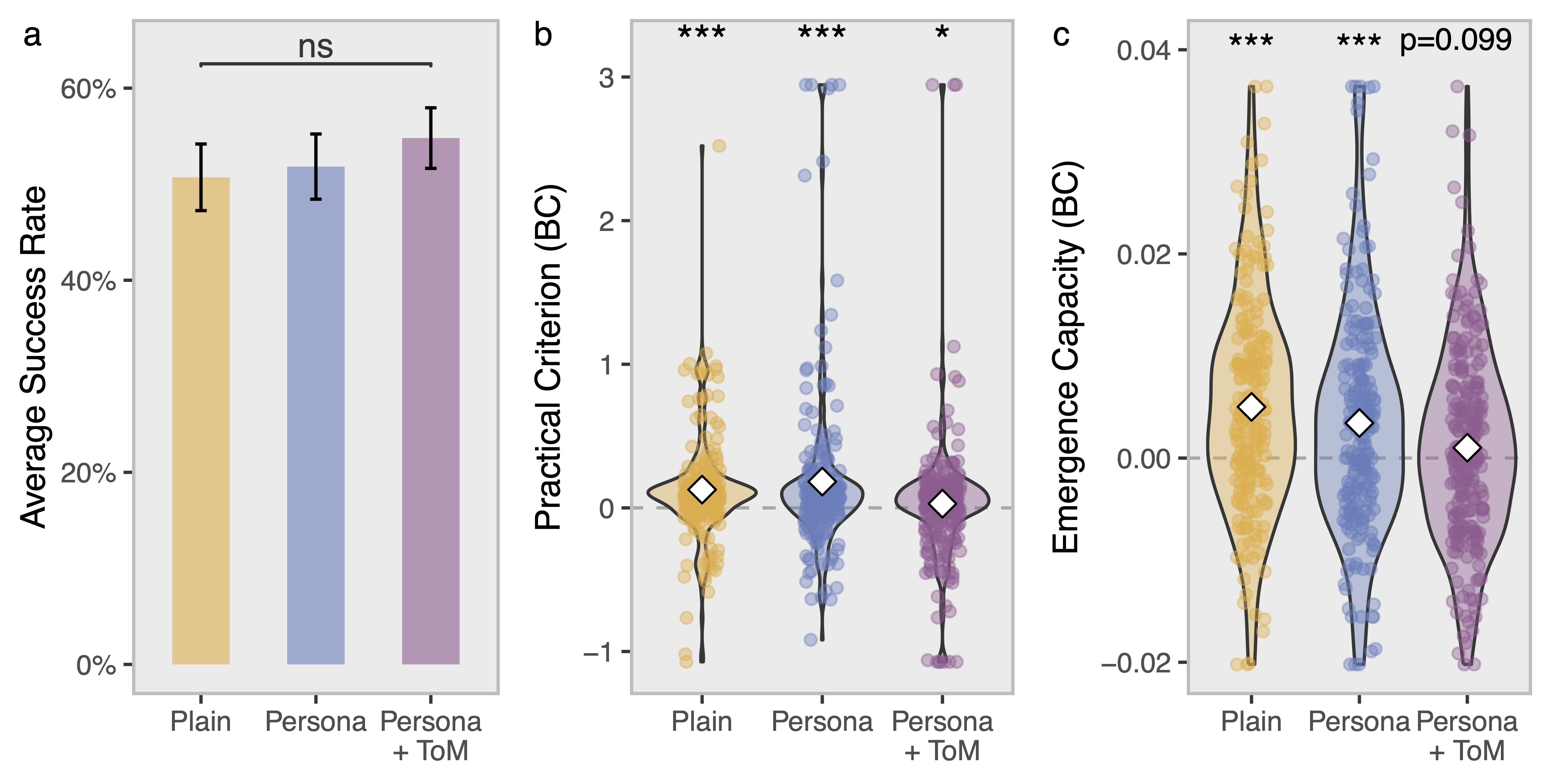

All three conditions show statistical evidence of emergence: the groups are doing something together that cannot be explained by any individual agent's behavior alone. This is a non-trivial finding—it means multi-agent LLM systems genuinely can develop collective properties.

But emergence alone doesn't guarantee good performance. In the Plain and Persona conditions, agents develop short-lived, unstable alignment—they briefly coordinate but fail to sustain it. The groups fall into oscillation. The Theory of Mind (ToM) condition is qualitatively different: it produces strong, sustained emergence capacity, with agents locked into a stable collective dynamic. The group behaves like a coherent unit, not a committee.

Group success rates are similar across conditions (left), but the underlying coordination structure is very different. The practical emergence criterion (center) and emergence capacity (right) are significantly elevated in the ToM condition, indicating deeper collective integration. *** p < 0.001.
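This section does not define the practical emergence criterion. A widely used formulation, and the one this sketch assumes, is that of Rosas et al.: Ψ = I(V_t; V_{t+1}) − Σ_j I(X_{j,t}; V_{t+1}). Here V is a group-level (macro) variable and X_j is agent j's behavior. Ψ > 0 means the group variable predicts its own future better than the agents' individual predictions add up to. That is sufficient, though not necessary, evidence of emergence. The functions below use a simple plug-in estimator on discrete time series. Taking something like the group's sum as V is an illustrative assumption.

```python
import math
from collections import Counter


def mutual_information(xs, ys):
    """Plug-in estimate of I(X; Y) in bits from paired discrete samples."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px, py = Counter(xs), Counter(ys)
    mi = 0.0
    for (x, y), c in joint.items():
        p_xy = c / n
        mi += p_xy * math.log2(p_xy / ((px[x] / n) * (py[y] / n)))
    return mi


def emergence_psi(agents, macro):
    """Assumed Rosas et al. criterion: Psi = I(V_t; V_t+1) - sum_j I(X_j,t; V_t+1).

    agents: list of per-agent time series (one list per agent)
    macro:  time series of the group-level variable V
    """
    future = macro[1:]
    whole = mutual_information(macro[:-1], future)
    parts = sum(mutual_information(x[:-1], future) for x in agents)
    return whole - parts


# Toy example: V is the XOR of two binary "agents" and alternates 0, 1, 0, 1, ...
x1 = [0, 0, 0, 0, 1, 1, 1, 1, 0]
x2 = [0, 1, 0, 1, 1, 0, 1, 0, 0]
v = [a ^ b for a, b in zip(x1, x2)]
print(emergence_psi([x1, x2], v))  # prints 1.0
```

In the toy example, neither agent's current value carries any information about the group's next state, yet the group variable predicts it perfectly, so Ψ equals 1 bit. On short real transcripts, plug-in estimates are biased upward, so comparisons against shuffled baselines or bias-corrected estimators matter.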

Theory of Mind Creates Stable Roles and Genuine Complementarity

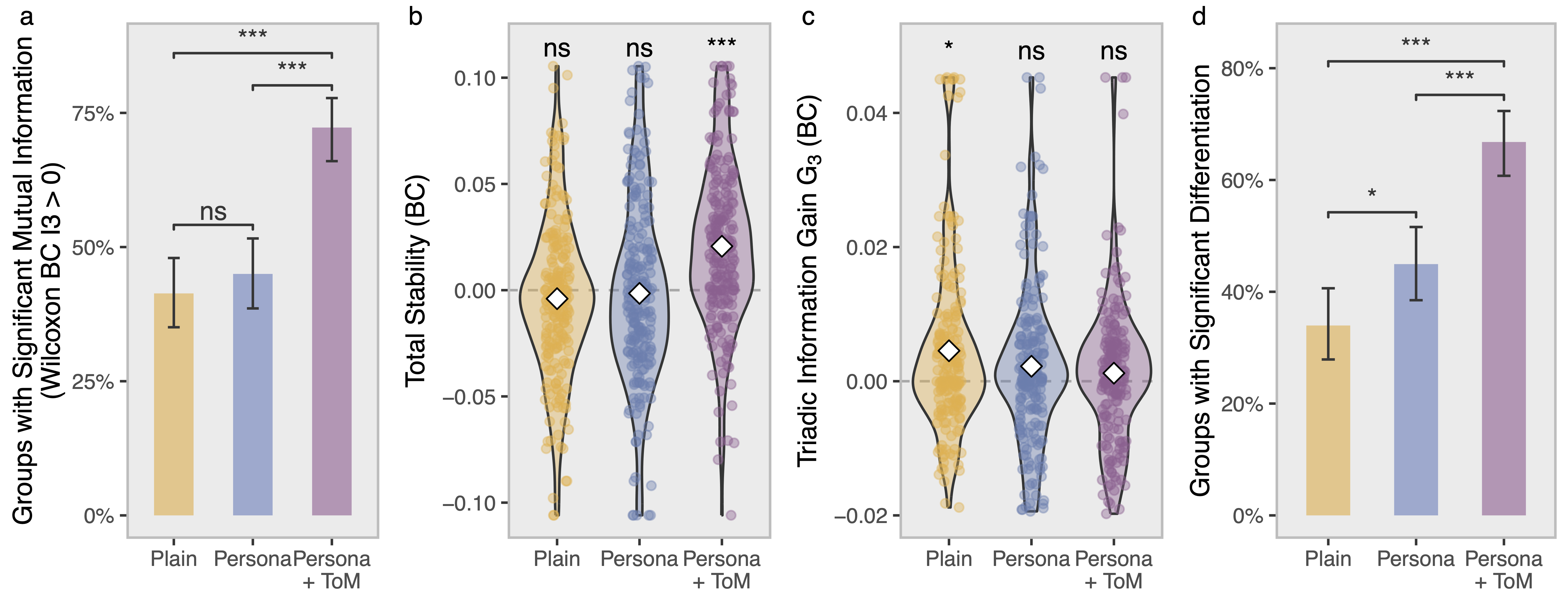

What exactly changes when agents "think about each other"? We find that two things happen simultaneously. First, the group stabilizes. In the ToM condition, the group settles into a stable attractor state in which its collective behavior becomes predictable and self-reinforcing, a sign that the agents have aligned on a shared goal. In the Plain and Persona conditions, groups wander chaotically.

Second, agents differentiate into distinct, persistent roles. Rather than all behaving similarly, ToM-prompted agents develop stable identities that shape their contributions round after round. This is not random variation—agents explicitly reference their persona's "personal experience" when reasoning, and their differentiated contributions are sustained across the full episode.
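The post does not spell out how persistent differentiation was tested. One simple check is the share of variance in guesses explained by agent identity (eta squared from a one-way ANOVA). It is used here only for illustration and is not necessarily the paper's method. Persistent per-agent offsets push it toward 1. Agents whose guesses merely fluctuate around the same level keep it near 0. On its own it does not rule out agents learning at different speeds. That would require tracking how each agent's mean shifts over the episode.

```python
from statistics import mean


def identity_variance_share(guesses_by_agent):
    """Eta squared: fraction of total variance in guesses explained by
    which agent made them. Maps agent id -> list of that agent's guesses."""
    all_guesses = [g for gs in guesses_by_agent.values() for g in gs]
    grand = mean(all_guesses)
    total_ss = sum((g - grand) ** 2 for g in all_guesses)
    if total_ss == 0:
        return 0.0  # no variation at all, so nothing to attribute to identity
    between_ss = sum(
        len(gs) * (mean(gs) - grand) ** 2 for gs in guesses_by_agent.values()
    )
    return between_ss / total_ss


# Illustrative (made-up) guesses: stable roles vs. same-level noise.
locked = {"a": [2, 2, 2, 2], "b": [8, 8, 8, 8]}
noisy = {"a": [2, 8, 2, 8], "b": [8, 2, 8, 2]}
print(identity_variance_share(locked), identity_variance_share(noisy))  # 1.0 0.0
```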

Crucially, neither differentiation alone nor alignment alone is enough. Performance improves when groups achieve both—agents aligned on the shared goal while contributing complementary information. This mirrors a core finding from human group research: effective teams balance integration and diversity.

ToM-prompted groups show significantly more mutual information about the group's future (a), higher total stability (b), and more agent differentiation (d). The coalition information gain G₃ (c) distinguishes goal-directed complementarity from coincidental diversity. *** p < 0.001; * p < 0.05.

Key Findings

Emergence Is Measurable

Multi-agent LLM systems exhibit genuine higher-order structure, detectable with information-theoretic tools. This is robust across multiple models and entropy estimators.
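The specific entropy estimators are not named here. Two standard choices show why robustness across estimators matters. The maximum-likelihood (plug-in) estimator systematically underestimates entropy from small samples. The Miller-Madow correction adds (m − 1)/(2N) nats, where m is the number of observed symbols and N the sample size. Mutual information can be built from either one as I(X; Y) = H(X) + H(Y) − H(X, Y).

```python
import math
from collections import Counter


def entropy_plugin(samples):
    """Maximum-likelihood ("plug-in") entropy estimate in bits."""
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in Counter(samples).values())


def entropy_miller_madow(samples):
    """Plug-in entropy plus the Miller-Madow bias correction (m - 1) / (2N),
    converted from nats to bits; m is the number of distinct observed symbols."""
    n = len(samples)
    m = len(set(samples))
    return entropy_plugin(samples) + (m - 1) / (2 * n * math.log(2))


samples = [0, 1, 2, 3] * 4
print(entropy_plugin(samples))  # prints 2.0
print(entropy_miller_madow(samples))  # slightly above 2.0
```

The correction shrinks as N grows. A finding that holds under both estimators is therefore unlikely to be an artifact of small-sample bias.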

Prompts Change Coordination Regimes

Theory of Mind prompting causally shifts groups from chaotic, unstable dynamics to stable, goal-directed coordination—a qualitative change, not just a quantitative improvement.

Roles Emerge Without Communication

Agents develop stable, differentiated identities from persona + ToM prompts alone—no direct agent-to-agent messaging required. Differentiation is identity-locked, not just noise or learning at different speeds.

Alignment + Complementarity = Performance

Redundancy (alignment on the goal) and synergy (differentiated contributions) interact: each amplifies the benefit of the other, consistent with collective intelligence theory in human groups.
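How the interaction was estimated isn't described here. A regression with a redundancy × synergy term would be typical. The smallest illustration of what "each amplifies the benefit of the other" means is a difference-in-differences on a 2×2 split of groups. The split could be at the median of each measure, which is an assumption for this sketch. The contrast is the gain from high synergy when redundancy is high, minus the same gain when redundancy is low.

```python
from statistics import mean


def interaction_contrast(cells):
    """Difference-in-differences on a 2x2 split of groups.

    cells maps (high_redundancy, high_synergy) -> list of performance scores.
    Positive: synergy helps more when redundancy is also high (amplification).
    Zero: the two effects are purely additive.
    """
    m = {key: mean(scores) for key, scores in cells.items()}
    gain_when_high = m[(True, True)] - m[(True, False)]
    gain_when_low = m[(False, True)] - m[(False, False)]
    return gain_when_high - gain_when_low


# Made-up scores for illustration only.
amplifying = {(False, False): [2, 2], (True, False): [3, 3],
              (False, True): [3, 3], (True, True): [7, 7]}
additive = {(False, False): [2, 2], (True, False): [3, 3],
            (False, True): [3, 3], (True, True): [4, 4]}
print(interaction_contrast(amplifying), interaction_contrast(additive))  # 3 0
```

A positive contrast means the combination is worth more than the two effects added together.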

Findings Replicate Across Models

GPT-4.1, Llama-70B, and Gemini all show strong emergence under ToM. Llama-8B largely fails, pointing to a ToM-capacity threshold. Qwen4 reveals a new failure mode: paralysis under coordination ambiguity (check the Appendix for details).

A New Design Principle

Don't just optimize individual agent capability. Optimize for the coordination regime the group enters. Internal structure—how agents relate to each other—determines collective intelligence.

Citation

@inproceedings{riedl2026emergent,
  title = {Emergent Coordination in Multi-Agent Language Models},
  author = {Riedl, Christoph},
  booktitle = {International Conference on Learning Representations},
  year = {2026},
  url = {https://arxiv.org/abs/2510.05174}
}
